When AI Shapes What We Believe

As a branding content curator, I urge designers, product teams, and leaders to read this analysis of AI influence. It reveals how algorithmic recommendations reshape judgement, amplify bias, and degrade human decision-making over time.

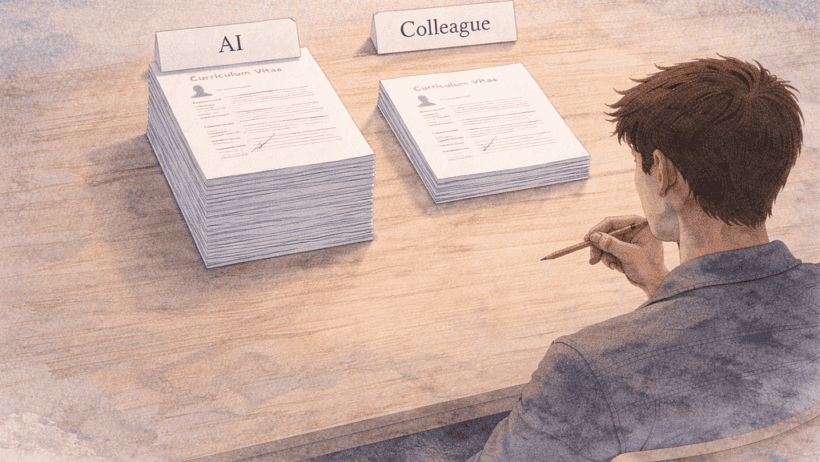

Grounded in rigorous studies published in Nature and in research from experimental psychology, the piece explains why people trust machines more than colleagues. It shows how small errors compound over repeated interactions, making bias invisible and persistent.

For brand strategists and UX designers, the implication is clear. You must design interfaces that expose uncertainty, encourage verification, and prevent passive deference to recommendations.

This post gives practical framing, research references, and design thinking to tackle interactional bias. Read it, then rethink how your products invite users to trust machine advice.

Essential reading for anyone shaping product trust, hiring systems, or conversational interfaces. The author synthesises evidence and design implications into clear, actionable guidance that teams can apply immediately.

It balances urgency with practical solutions, offering concrete patterns and checklist items designers can test. Read this to avoid building systems that quietly erode fairness and brand credibility.

Share it, discuss it, and act on it now in every product team that cares about ethical trust.

Source: uxdesign.cc